BLUEPRINT · ENGINEERING · CROSS-VERTICAL

Catch defects before review, draft fixes, ship faster with audit.

Named scope, named timeline, named stack: ADK · Memory Bank · Evals · 3 weeks.

Design ceiling

Routine review feedback drafted before the human reviewer reads the diff.

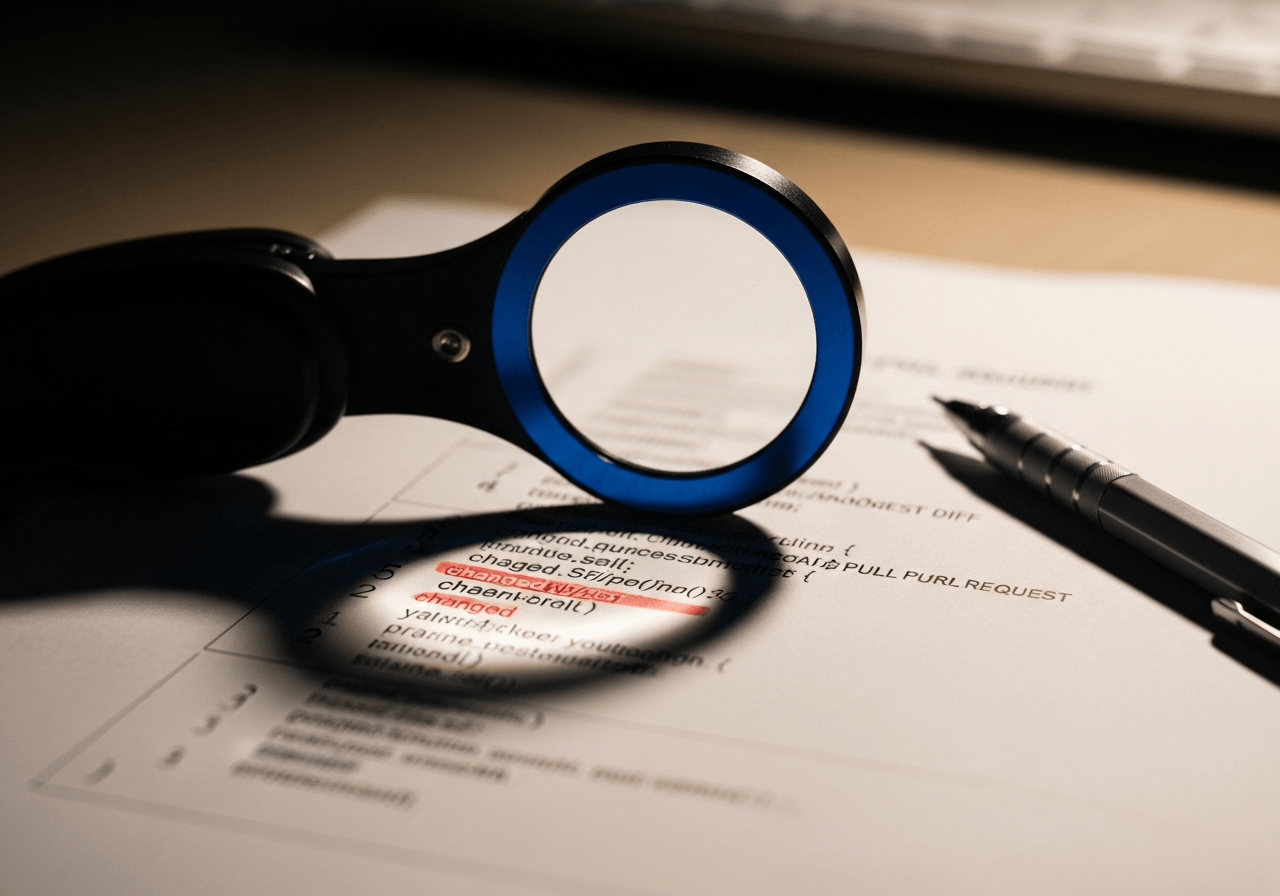

The agent system is designed to read the PR against the repo conventions, run the static checks, and draft the routine feedback as inline comments — so the senior reviewer opens the PR with the style, test, and security signals already on the page and spends the time on architecture and intent.

The problem

A platform team running disciplined code review is paying its most expensive engineers to flag the same patterns on every PR — a missing test, a swallowed exception, a style drift, a known anti-pattern from the repo conventions doc nobody re-reads. The signal is correct and the work is repetitive, and the reviewer who could be sharpening architecture is sharpening a checklist.

The job is not to replace the human reviewer. The job is to draft the routine feedback first — inline comments on the diff, fix suggestions where the fix is mechanical, the static-analysis output already triaged — so the reviewer arrives to read intent and design, not to point at line 42 again. One agent, Memory Bank carrying the repo conventions across the review, eval set tuned against the team's own historical PRs.
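To make "routine feedback" concrete, here is a minimal sketch of one such check: flagging a swallowed exception and drafting the inline comment for it. It assumes the code under review is Python and uses only the standard-library `ast` module. The `DraftComment` type, the `swallowed-exception` label, and the comment wording are illustrative assumptions, not part of the blueprint.

```python
import ast
from dataclasses import dataclass


@dataclass(frozen=True)
class DraftComment:
    """Hypothetical shape of one drafted inline comment."""
    line: int      # 1-based line number in the checked source
    signal: str    # illustrative signal label
    body: str      # text the reviewer would see on the diff


def find_swallowed_exceptions(source: str) -> list[DraftComment]:
    """Flag except handlers whose body is only `pass` or `...`."""
    comments = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.ExceptHandler):
            continue
        # A handler is "swallowing" if every statement in it is a no-op.
        only_noop = all(
            isinstance(stmt, ast.Pass)
            or (
                isinstance(stmt, ast.Expr)
                and isinstance(stmt.value, ast.Constant)
                and stmt.value.value is Ellipsis
            )
            for stmt in node.body
        )
        if only_noop:
            comments.append(
                DraftComment(
                    line=node.lineno,
                    signal="swallowed-exception",
                    body="Exception is caught and discarded; log it or re-raise.",
                )
            )
    # ast.walk is breadth-first, so sort to report in source order.
    return sorted(comments, key=lambda c: c.line)
```

A check like this is mechanical enough to run before the model is involved; the agent's job is then to decide whether the finding is worth surfacing under the repo conventions.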

Agent architecture

The platform’s four pillars, mapped to the components this agent system actually exercises.

One agent. Reads the diff, applies the conventions, drafts the comments.

ADK · Agent Studio · Model Garden

Repo conventions and prior-PR context live in Memory Bank across the review.

Agent Runtime · Memory Bank

Source code stays in your repo. Every comment posted under a registered identity.

Agent Registry · Model Armor · Gateway

Eval set built from your team's historical PRs and reviewer accept rates.

Evals · Observability · Agent Analytics
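As a rough illustration of what "reviewer accept rates" could mean in practice, the sketch below computes a per-signal accept rate from historical review comments. The `HistoricalComment` record and its fields are assumptions made for the example; the real export from your review tool will look different.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class HistoricalComment:
    """Hypothetical record of one past review comment."""
    pr_id: str
    signal: str      # e.g. "missing-test", "style-drift"
    accepted: bool   # True if the author acted on the comment


def accept_rate_by_signal(history: list[HistoricalComment]) -> dict[str, float]:
    """Return the fraction of accepted comments for each signal type."""
    totals: dict[str, int] = defaultdict(int)
    accepted: dict[str, int] = defaultdict(int)
    for comment in history:
        totals[comment.signal] += 1
        accepted[comment.signal] += int(comment.accepted)
    return {signal: accepted[signal] / totals[signal] for signal in totals}
```

Signals with a low historical accept rate are natural candidates for suppression; signals with a high one are where the agent should be held to the strictest eval threshold.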

Engagement · 3 weeks

Fixed scope, fixed price, fixed timeline. Here is what happens when.

Week 1

Discovery and reviewer-agent spec.

Walk recent PRs with the platform lead and a senior reviewer. Decide which signals the agent surfaces and which it suppresses. Capture the repo conventions doc as the agent's reference state.
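One way the Week 1 surface-or-suppress decision might be written down is as a small policy object the agent's tools consult. This is a sketch under assumptions: the `SignalPolicy` class, the signal names, and the dict-shaped findings are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SignalPolicy:
    """Hypothetical record of which signals the agent may comment on."""
    surface: set[str] = field(default_factory=set)
    suppress: set[str] = field(default_factory=set)

    def allows(self, signal: str) -> bool:
        # Suppression wins over surfacing, and unlisted signals stay
        # silent, so a new check never posts until someone opts it in.
        return signal in self.surface and signal not in self.suppress


def filter_findings(findings: list[dict], policy: SignalPolicy) -> list[dict]:
    """Keep only findings whose signal the policy allows."""
    return [f for f in findings if policy.allows(f["signal"])]
```

Defaulting unlisted signals to silent is a design choice for the sketch: it keeps the agent conservative while the team is still calibrating trust.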

Week 2

Reviewer agent and eval pass.

Build the ADK reviewer agent against your repo. First eval pass on a sampled set of historical PRs against the reviewer accept-or-reject signal. Tighten prompts and tool calls against the eval results.
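The first eval pass could be sketched roughly as follows: draw a reproducible sample of historical PRs, then score the agent's drafted comments against the comments reviewers actually accepted. Matching on a `(path, line, signal)` tuple and the choice of precision and recall as metrics are assumptions for illustration; the engagement's harness may match and score differently.

```python
import random

# A comment is identified here by (path, line, signal); this key is an
# assumption of the sketch, not a fixed format.
CommentKey = tuple[str, int, str]


def sample_prs(pr_ids: list[str], k: int, seed: int = 0) -> list[str]:
    """Pick up to k PR ids reproducibly, so eval runs are comparable."""
    rng = random.Random(seed)
    return sorted(rng.sample(sorted(pr_ids), min(k, len(pr_ids))))


def score_pr(
    agent_comments: set[CommentKey],
    accepted_comments: set[CommentKey],
) -> tuple[float, float]:
    """Return (precision, recall) of agent comments against accepted ones."""
    hits = len(agent_comments & accepted_comments)
    # Convention for this sketch: an empty side scores 1.0, not an error.
    precision = hits / len(agent_comments) if agent_comments else 1.0
    recall = hits / len(accepted_comments) if accepted_comments else 1.0
    return precision, recall
```

Precision is the number to watch first: a reviewer who sees noisy comments on day one stops reading them.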

Week 3

PR pipeline wiring and shadow run.

Wire the agent into the PR pipeline. Shadow-run on real PRs for the week with reviewer feedback flowing back into the eval set. Hand off the repo, the eval harness, and the runbook to the platform lead.
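A shadow run might look something like this: the agent's posting tool is swapped for a recorder that stores comments instead of publishing them, and reviewer verdicts on those comments are exported as new eval cases. The `ShadowPoster` class, the argument list of its `post_review_comment` method, and the JSON-lines export are all assumptions for the sketch; the signature of the real `post_review_comment` tool is not shown in this blueprint.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class ShadowRecord:
    """Hypothetical record of one comment the agent would have posted."""
    pr_id: str
    path: str
    line: int
    body: str
    reviewer_verdict: Optional[str] = None  # "accept" or "reject" once reviewed


class ShadowPoster:
    """Stand-in for the posting tool: records comments, posts nothing."""

    def __init__(self) -> None:
        self.records: list[ShadowRecord] = []

    def post_review_comment(
        self, pr_id: str, path: str, line: int, body: str
    ) -> ShadowRecord:
        record = ShadowRecord(pr_id, path, line, body)
        self.records.append(record)
        return record

    def record_verdict(self, record: ShadowRecord, verdict: str) -> None:
        if verdict not in ("accept", "reject"):
            raise ValueError(f"unknown verdict: {verdict!r}")
        record.reviewer_verdict = verdict

    def export_eval_cases(self) -> str:
        """Serialize reviewed comments as JSON lines for the eval set."""
        reviewed = [r for r in self.records if r.reviewer_verdict is not None]
        return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in reviewed)
```

Only comments with a verdict are exported, so unreviewed drafts never enter the eval set as if they were ground truth.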

What it looks like in code

The actual shape of the code your team owns at engagement end. Real ADK, real tools, real instruction copy.

agents/code_reviewer/agent.py

python

from google.adk.agents import LlmAgent
from google.adk.tools import FunctionTool

from .tools import (
    fetch_pr_diff,
    fetch_repo_conventions,
    run_static_analysis,
    post_review_comment,
)

reviewer = LlmAgent(
    name="code_reviewer",
    model="gemini-2.0-pro",
    instruction=(
        "You draft the routine review feedback on a pull request: missing "
        "tests, swallowed exceptions, style drift against the repo "
        "conventions, static-analysis findings worth surfacing. Post "
        "comments inline on the diff. Suppress signals the conventions "
        "doc explicitly accepts. Architecture and intent are the human "
        "reviewer's call, not yours."
    ),
    tools=[
        FunctionTool(fetch_pr_diff),
        FunctionTool(fetch_repo_conventions),
        FunctionTool(run_static_analysis),
        FunctionTool(post_review_comment),
    ],
)

What you walk away with

Every blueprint hands the engineering team a deployed agent and the artefacts to run it themselves. No black box, no lock-in.

Three weeks. Named scope. Working agent on Agent Runtime at the end.

Code

Lives in your Git org, owned from commit one.

Governance

Model Armor and Agent Registry on day one.

Speed

Two weeks to a runnable pilot. Eight to production.
